The rise of cloud computing – and Platform as a Service (PaaS) and Container as a Service (CaaS) offerings in particular – is changing the way companies deploy and operate their business applications. One of the most important challenges when designing cloud applications is choosing fully managed cloud services that reduce costs and time‑consuming operational tasks without compromising security.

This blog post shows you how to host applications on Microsoft Azure App Service and secure them with NGINX Plus to prevent attacks from the Internet.

Brief Overview of Microsoft Azure App Service

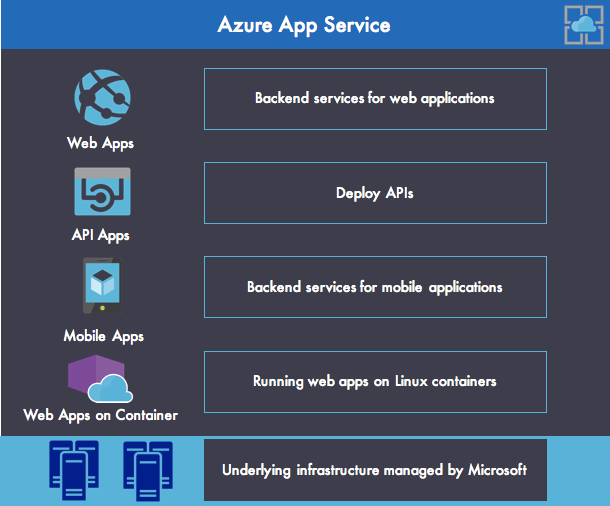

Microsoft Azure App Service is an enterprise‑grade and fully managed platform that allows organizations to deploy web, API, and mobile apps in Microsoft Azure without managing the underlying infrastructure, as shown in Figure 1. Azure App Service provides the following main features:

- Web Apps enables you to deploy and run web apps in various languages and frameworks (ASP.NET, Node.js, Java, PHP, and Python). It runs scalable and reliable web applications on Internet Information Services (IIS) with comprehensive application management capabilities (monitoring, swapping from staging to production, deleting deployed applications, and so on), and provides a Docker runtime for running web applications on Linux in containers.

- API Apps brings the tools for deploying REST APIs with CORS (Cross‑Origin Resource Sharing) support. Azure API Apps can be easily secured with Azure Active Directory, social network single sign‑on (SSO), or OAuth, with no code changes required.

- Mobile Apps provides a fast way to deploy mobile backend services that support essential features such as authentication, push notifications, user management, cloud storage, etc.

With Azure App Service, Microsoft provides a rich and fast way to run web applications in the cloud. Developers can build their applications locally using ASP.NET, Java, Node.js, PHP, and Python and easily deploy them to Azure App Service with Microsoft Visual Studio or the Azure CLI. DevOps teams can also benefit from Azure App Service’s continuous deployment feature to deploy application releases quickly and reliably to multiple environments.

Applications on Azure App Service can access other resources deployed on Azure or can establish connections over VPNs to on‑premises corporate resources.

Understanding Azure App Service Environments

By default, an application created with Azure App Service is exposed directly to the Internet and assigned to a subdomain of azurewebsites.net. For added security, you can protect your app with SSL termination, or with authentication and authorization protocols such as OAuth2 or OpenID Connect (OIDC). However, it is not possible to customize the network with fine‑grained outbound and inbound security rules or apply middleware such as a web application firewall (WAF) to prevent malicious attacks or exploits that come from the Internet.

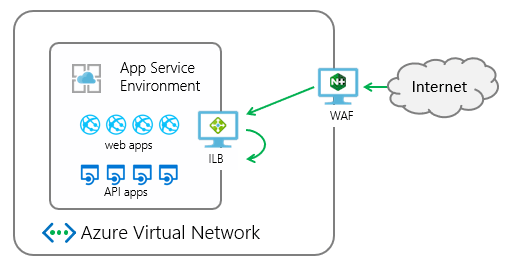

If you run sensitive applications in Azure App Service and want to protect them, you can use Azure App Service Environments (ASEs). An ASE is an isolated environment deployed into a virtual network and dedicated to a single customer’s applications. Thus, you gain more control over inbound and outbound application network traffic.

With ASEs you can deploy web, API, mobile, or function apps inside a more secure environment at very high scale, as shown in Figure 2.

Creating a New ASE v2

There are two versions of the ASE: ASE v1 and ASE v2. In this post we’re discussing ASE v2.

You can create a new ASE v2 manually by using the Azure Portal, or automatically by using Azure Resource Manager.

When creating a new ASE, you have to choose between two deployment types:

- An external ASE exposes the ASE‑hosted applications through a public IP address.

- An ILB ASE exposes the ASE‑hosted applications on a private IP address accessible only inside your Azure Virtual Network. The internal endpoint is what Azure calls an internal load balancer (ILB).

In the following example, we’re choosing an ILB ASE to prevent access from the Internet. Thus, applications deployed in our ASE are accessible only from virtual machines (VMs) running in the same network. The following two commands use Azure Resource Manager and the Azure CLI to provision a new ILB ASE v2:

$ azure config mode arm

$ azure group deployment create my-resource-group my-deployment-name --template-uri https://raw.githubusercontent.com/azure/azure-quickstart-templates/master/201-web-app-asev2-ilb-create/azuredeploy.json

Securing Access to Apps in a Publicly Accessible ASE

If, on the other hand, you want your app to be reachable from the Internet, you have to protect it against malicious attackers that might attempt to steal sensitive information stored in your application.

To secure applications at Layer 7 in an ASE, you have two main choices:

- Azure Application Gateway

- NGINX Plus with the NGINX ModSecurity WAF module

(You can substitute a custom application delivery controller [ADC] with WAF capabilities, but we don’t cover that use case here.)

The choice of solution depends on your security constraints. On one hand, Azure Application Gateway provides a turnkey solution for security enforcement and doesn’t require you to maintain the underlying infrastructure. On the other hand, deploying NGINX Plus on VMs gives you a powerful stack with more control and flexibility to fine‑tune your security rules.

Choosing between Azure Application Gateway and NGINX Plus to load balance and secure applications created inside an ASE requires a good understanding of the features provided by each solution. While Azure Application Gateway works for simple use cases, for complex use cases it does not provide many features that come standard in NGINX Plus.

The following table compares support for load‑balancing and security features in Azure Application Gateway and NGINX Plus. More details about NGINX Plus features appear below the table.

| Feature | Azure Application Gateway | NGINX Plus |
|---|---|---|
| Mitigation capability | Application layer (Layer 7) | Application layer (Layer 7) |
| HTTP-aware | ✅ | ✅ |
| HTTP/2-aware | ❌ | ✅ |
| WebSocket-aware | ❌ | ✅ |
| SSL offloading | ✅ | ✅ |
| Routing capabilities | Simple decision based on request URL or cookie‑based session affinity | Advanced routing capabilities |
| IP address-based access control lists | ❌ (must be defined at the web-app level in Azure) | ✅ |
| Endpoints | Any Azure internal IP address, public Internet IP address, Azure VM, or Azure Cloud Service | Any Azure internal IP address, public Internet IP address, Azure VM, or Azure Cloud Service |
| Azure VNet support | Both Internet‑facing and internal (VNet) applications | Both Internet‑facing and internal (VNet) applications |
| WAF | ✅ | ✅ |
| Volumetric attacks | Partial | Partial |
| Protocol attacks | Partial | Partial |
| Application-layer attacks | ✅ | ✅ |
| HTTP Basic Authentication | ❌ | ✅ |
| JWT authentication | ❌ | ✅ |
| OpenID Connect SSO | ❌ | ✅ |

As you can see, NGINX Plus and Azure Application Gateway both act as ADCs with Layer 7 load‑balancing features plus a WAF to ensure strong protection against common web vulnerabilities and exploits.
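To make the access-control rows of the table more concrete, here is a minimal sketch of how IP address-based access control lists, HTTP Basic Authentication, and JWT authentication might be configured in NGINX Plus. The IP addresses, URI prefixes, realm names, and file paths are all placeholders we made up for illustration, and the fragment is assumed to sit inside the `http` context of your configuration.

```nginx
# Sketch only: every address, path, and realm name below is a placeholder.
upstream backend {
    server 10.0.1.11;                      # assumed ILB address of your ASE
}

server {
    listen 80;

    # IP address-based access control list
    location /admin/ {
        allow 203.0.113.0/24;              # example range that is let through
        deny  all;                         # all other clients receive 403
        proxy_set_header Host $host;
        proxy_pass http://backend;
    }

    # HTTP Basic Authentication
    location /reports/ {
        auth_basic           "Restricted";
        auth_basic_user_file /etc/nginx/.htpasswd;   # hypothetical password file
        proxy_set_header Host $host;
        proxy_pass http://backend;
    }

    # JWT authentication (provided by NGINX Plus, not NGINX Open Source)
    location /api/ {
        auth_jwt          "API";
        auth_jwt_key_file /etc/nginx/jwk.json;       # hypothetical JWK file
        proxy_set_header Host $host;
        proxy_pass http://backend;
    }
}
```

Each `location` block applies one control independently, so you can combine or drop them depending on which parts of an application need protection.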

NGINX Plus provides several additional features missing from Azure Application Gateway:

- URL rewriting and redirecting – With NGINX Plus you can rewrite the URL of a request before passing it to a backend server. This means you can alter the location of files or request paths without modifying the URL advertised to clients. You can also redirect requests. For example, you can redirect all HTTP requests to an HTTPS server.

- Connection and rate limits – You can configure multiple limits to control traffic to and from your NGINX Plus instances. These include limits on the number of inbound connections, the rate of inbound requests, the connections to backend nodes, and the rate of data transmission from NGINX Plus to clients.

- HTTP/2 and WebSocket support – NGINX Plus supports HTTP/2 and WebSocket at the application layer (Layer 7). Azure Application Gateway does not; instead Azure Load Balancer supports them at the network layer (Layer 4), where TCP and UDP operate.
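The three features above can be sketched in a single configuration. This is an illustration under assumptions rather than a recommended production setup: the zone names, limits, URI paths, certificate paths, and backend address are hypothetical, and the fragment is assumed to sit inside the `http` context. Note that recent NGINX releases prefer a separate `http2 on;` directive over the `http2` parameter of `listen` used here.

```nginx
# Sketch only: names, limits, paths, and addresses are illustrative.

# Shared-memory zones that track connections and request rates per client IP
limit_conn_zone $binary_remote_addr zone=per_ip_conn:10m;
limit_req_zone  $binary_remote_addr zone=per_ip_req:10m rate=10r/s;

# Send "Connection: upgrade" upstream only when the client asks for an upgrade
map $http_upgrade $connection_upgrade {
    default upgrade;
    ''      close;
}

upstream backend {
    zone backend 64k;
    server 10.0.1.11 max_conns=200;        # cap connections to the backend node
}

# Redirect all HTTP requests to the HTTPS server
server {
    listen 80;
    return 301 https://$host$request_uri;
}

server {
    listen 443 ssl http2;                  # HTTP/2 toward clients
    ssl_certificate     /etc/nginx/ssl/example.crt;   # hypothetical paths
    ssl_certificate_key /etc/nginx/ssl/example.key;

    limit_conn per_ip_conn 20;             # inbound connections per client IP
    limit_req  zone=per_ip_req burst=20;   # inbound request rate per client IP
    limit_rate 512k;                       # bytes per second sent to each client

    # Rewrite the request path before proxying; clients keep seeing /old-path/
    rewrite ^/old-path/(.*)$ /new-path/$1 last;

    location / {
        proxy_set_header Host $host;
        proxy_pass http://backend;
    }

    # WebSocket proxying requires HTTP/1.1 and the Upgrade handshake headers
    location /ws/ {
        proxy_http_version 1.1;
        proxy_set_header Upgrade    $http_upgrade;
        proxy_set_header Connection $connection_upgrade;
        proxy_set_header Host       $host;
        proxy_pass http://backend;
    }
}
```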

For additional security, you can deploy Azure DDoS Protection to mitigate threats at Layers 3 and 4, complementing the Layer 7 threat‑mitigation features provided by Azure Application Gateway or NGINX Plus.

Using NGINX Plus with Azure App Service to Secure Applications

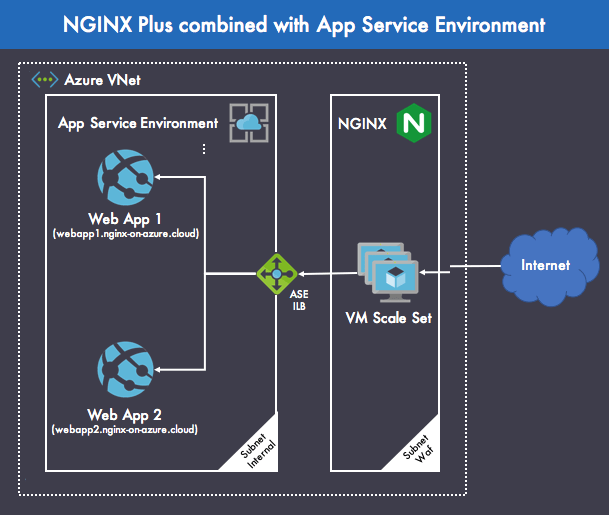

Figure 3 shows how to combine NGINX Plus and Azure App Service to provide a secure environment for running business applications in production. This deployment strategy uses NGINX Plus for its load balancing and WAF features.
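As a rough sketch of the WAF part of this strategy, the following shows how the NGINX ModSecurity WAF module might be enabled in front of the ASE. It assumes the dynamic module is already installed on the VM; the rules file path and the backend address are placeholders, and the rule set itself (for example, which rules the file includes) is not shown.

```nginx
# Sketch only: the rules file path and backend address are placeholders.

# Main context: load the ModSecurity dynamic module
load_module modules/ngx_http_modsecurity_module.so;

events {}

http {
    upstream backend {
        server 10.0.1.11;                  # assumed ILB address of your ASE
    }

    server {
        listen 80;

        # Inspect requests with ModSecurity before they are proxied
        modsecurity on;
        modsecurity_rules_file /etc/nginx/modsec/main.conf;

        location / {
            proxy_set_header Host $host;
            proxy_pass http://backend;
        }
    }
}
```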

The deployment combines the following components:

- Azure Virtual Network (VNet) – Represents a virtual network in the Azure cloud dedicated to your organization. It provides a logical isolation that allows Azure resources to communicate securely with each other in a virtual network. Here, we have defined two subnets: Internal for web applications that are not exposed directly to the Internet and Waf for NGINX Plus and the infrastructure that underlies it.

- Azure App Service Environment – This sample deployment utilizes two sample web applications – Web App 1 and Web App 2 – to demonstrate how to secure and load balance different web apps with NGINX Plus. In NGINX Plus, you distribute requests to different web applications by configuring distinct `upstream` blocks, and do content routing based on URI with `location` blocks. The following shows the minimal NGINX Plus configuration that meets this goal (here all requests go to the same upstream group):

      upstream backend {
          server IP-address-of-your-ASE-ILB;
      }

      server {
          location / {
              proxy_set_header Host $host;
              proxy_pass http://backend;
          }
      }

- NGINX Plus – Load balances HTTP(S) connections across multiple web applications. Deploying NGINX Plus in a VM gives you more control over the infrastructure than other Azure services offer. For example, with a VM you can choose the operating system (Linux or Windows) which runs inside an isolated virtual network. Indeed, Azure VMs are available for all of the Linux distros that NGINX Plus supports (except Amazon Linux, for obvious reasons).

- Azure VM scale sets – VM scale sets are an Azure compute resource that you can use to deploy and manage a set of identical VMs. You can configure the size of the VM and assign the VM to the right VNet. All the VMs that run inside the scale set are load balanced by an Azure Load Balancer that provides TCP connectivity between the VM instances. Here, each VM in the scale set is based on the NGINX Plus image available from Azure Marketplace. Scale sets are designed to provide true autoscaling.
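The Azure App Service Environment item above mentions distinct `upstream` blocks and URI-based routing with `location` blocks, while the minimal configuration sends everything to one group. A possible two-application variant is sketched below. It assumes that both apps sit behind the same ILB address and that the ASE picks the app from the `Host` header; the IP address, URI prefixes, and hostnames are hypothetical and need to be replaced with your own values.

```nginx
# Sketch only: the address, URI prefixes, and hostnames are hypothetical.
upstream webapp1 {
    server 10.0.1.11;                      # assumed ILB address of your ASE
}

upstream webapp2 {
    server 10.0.1.11;                      # same ILB; the Host header selects the app
}

server {
    listen 80;

    # Requests under /app1/ go to Web App 1
    location /app1/ {
        proxy_set_header Host webapp1.internal.example.com;
        proxy_pass http://webapp1/;        # trailing slash replaces the /app1/ prefix with /
    }

    # Requests under /app2/ go to Web App 2
    location /app2/ {
        proxy_set_header Host webapp2.internal.example.com;
        proxy_pass http://webapp2/;        # trailing slash replaces the /app2/ prefix with /
    }
}
```

Keeping one `upstream` block per application means each group can later be given its own servers or load‑balancing settings without touching the routing rules.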

Azure also supports resource groups as an easy way to group the Azure resources for an application in a logical manner. Using a resource group has no impact on infrastructure design and topology, and we don’t show them here.

NGINX Plus High Availability and Autoscaling with Azure VM Scale Sets

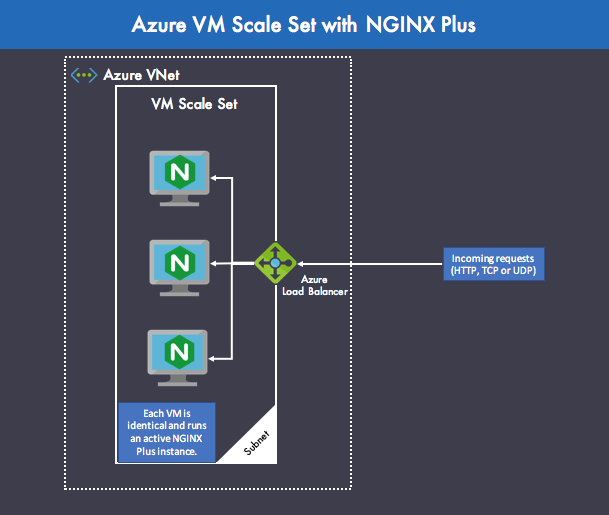

An Azure VM scale set gives you the power of virtualization with the ability to scale at any time without having to buy and maintain the physical hardware that supports scaling. However, you are still responsible for maintaining the VMs: configuring and patching them, applying security updates, and installing the software that runs on them.

In the architecture shown in Figure 4, NGINX Plus instances are deployed for active‑active high availability inside an Azure VM scale set. An active‑active setup is great because all of the NGINX Plus VMs can handle an incoming request routed by Azure Load Balancer, giving you cost‑efficient capacity.

With Azure VM scale sets, you can also easily set up autoscaling of NGINX Plus instances based on average CPU usage. You need to take care to synchronize the NGINX Plus config files in this case. You can use the NGINX Plus configuration sharing feature for this purpose, as described in the NGINX Plus Admin Guide.

Summary

By using Azure App Service for your cloud applications and NGINX Plus in front of your web apps, APIs, and mobile backends, you can load balance and secure these applications at a global scale. The combination gives you a fully load‑balanced infrastructure with a high level of protection against exploits and attacks from the web, and a robust design for running critical applications securely in production.

Resources

- Web Apps overview (Microsoft)

- Introduction to the App Service Environments (Microsoft)

- Create an application gateway with a web application firewall using the Azure portal (Microsoft)

- Compare features in NGINX Open Source and NGINX Plus (NGINX)

- HTTP Load Balancing (NGINX)

Guest co‑author Cedric Derue is a Solution Architect and Microsoft MVP at Altran. Guest co‑author Vincent Thavonekham is Microsoft Regional Director and Azure MVP at VISEO.