[Editor – The solution described in this blog relies on the NGINX Plus Status and Upstream Conf modules (enabled by the status and upstream_conf directives). Those modules were deprecated in NGINX Plus Release 13 (R13), where the NGINX Plus API replaced them, and will not be available after NGINX Plus R15. For the solution to continue working, update the roles and scripts that refer to the two deprecated modules.]

Service discovery is a key component of most distributed systems and service‑oriented or microservices architectures. Because service instances have dynamically assigned network locations which can change over time due to autoscaling, failures, or upgrades, clients need a sophisticated service discovery mechanism for tracking the current network location of a service instance.

This blog post describes how to dynamically add or remove load‑balanced servers that are registered with Consul, a popular tool for service discovery. The solution uses NGINX Plus’ dynamic reconfiguration API, which eliminates the need to reload the NGINX Plus configuration when changing the set of load‑balanced servers. With NGINX Plus Release 8 (R8) and later, changes you make with the API can persist across restarts and configuration reloads. [Editor – This paragraph refers to the deprecated Upstream Conf module. The description also applies to the NGINX Plus API, however.]
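Each change the API makes to an upstream group is a single HTTP call. As an illustration, here is a minimal sketch that builds (but does not send) add and remove requests for the NGINX Plus API that replaced Upstream Conf. The upstream name backend, the default API version number 3, and the ApiRequest type are assumptions for illustration only; check which API versions your NGINX Plus release supports before using a version number.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class ApiRequest:
    """Hypothetical description of one HTTP call to the NGINX Plus API."""
    method: str
    path: str
    body: Optional[str] = None


def add_server_request(upstream: str, address: str, api_version: int = 3) -> ApiRequest:
    # Adding a server: POST a JSON object with a "server" field
    # to the upstream group's servers collection.
    path = f"/api/{api_version}/http/upstreams/{upstream}/servers"
    return ApiRequest("POST", path, json.dumps({"server": address}))


def remove_server_request(upstream: str, server_id: int, api_version: int = 3) -> ApiRequest:
    # Removing a server: DELETE it by the numeric ID that NGINX Plus
    # assigns to each server in the group.
    path = f"/api/{api_version}/http/upstreams/{upstream}/servers/{server_id}"
    return ApiRequest("DELETE", path)


if __name__ == "__main__":
    # "backend" and the address below are hypothetical example values.
    print(add_server_request("backend", "10.0.0.5:80"))
    print(remove_server_request("backend", 2))
```

Removal is keyed by server ID rather than address, so a script typically lists the group's current servers first to learn each server's ID.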

Consul, developed by HashiCorp, is most widely used for discovering and configuring services in your infrastructure. It also provides – out of the box – failure detection (health checking), a key/value store, and support for multiple data centers.

To make it easier to combine the NGINX Plus on‑the‑fly reconfiguration API with Consul, we’ve created a GitHub project called consul-api-demo, with step‑by‑step instructions for setting up the demo described in this blog post. In this post, we will walk you through the proof of concept. Using tools like Docker, Docker Compose, Homebrew, and jq, you can spin up a Docker–based environment in which NGINX Plus load balances HTTP traffic to a couple of hello‑world applications, with all components running in separate Docker containers.

Editor – Demos are also available for other service discovery methods:

- Service Discovery for NGINX Plus Using DNS SRV Records from Consul

- Service Discovery for NGINX Plus with etcd

- Service Discovery for NGINX Plus with ZooKeeper

How the Service Discovery Demo Works

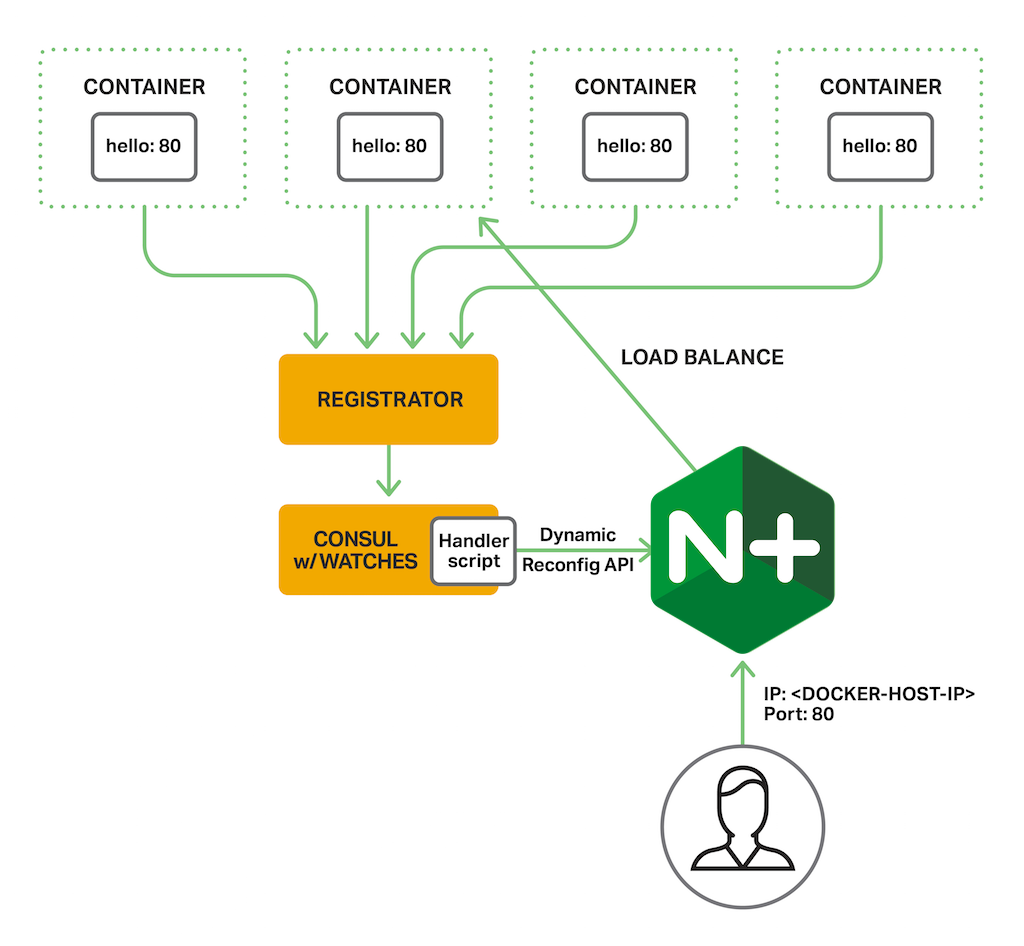

First we spin up a separate Docker container for each of the following apps:

- Consul – Performs service discovery.

- Registrator – Registers services with Consul. Registrator monitors for containers being started and stopped and updates Consul when a container changes state.

- hello – Simulates a backend server. This is another project from NGINX, Inc.: an NGINX web server that serves an HTML page listing the client IP address, the request URI, and the web server’s hostname, IP address, port, and local time. (The diagram shows four instances of this app.)

- NGINX Plus – Load balances the backend server apps.

In this demo, the NGINX Plus container listens on public port 80 and serves the built‑in NGINX Plus live activity monitoring dashboard on port 8080. The Consul container listens on ports 8300, 8400, 8500, and 53, and advertises the IP address of the Docker host (HOST_IP) as the address other containers use to communicate with it.

Registrator monitors Docker for new containers that are launched with exposed ports, and registers the associated service with Consul. By setting environment variables within the containers, we can be more explicit about how to register the services with Consul. For each hello‑world container, we set the SERVICE_TAGS environment variable to production to identify the container as an upstream server for load balancing by NGINX Plus. When a container quits or is removed, Registrator removes it from the Consul service catalog automatically.
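Selecting the load‑balancing candidates then amounts to filtering Consul's service catalog on the production tag. The following is a minimal sketch, assuming the input is the JSON array returned by Consul's /v1/catalog/service/<name> endpoint (parsed into Python dicts) for the hello‑world service; the function name and sample values are hypothetical. It relies on Consul leaving ServiceAddress empty when a service uses its node's address.

```python
from typing import Any, Iterable, List, Mapping


def production_addresses(entries: Iterable[Mapping[str, Any]],
                         tag: str = "production") -> List[str]:
    """Return sorted "host:port" strings for catalog entries carrying `tag`."""
    addresses = set()
    for entry in entries:
        # ServiceTags can be null in a Consul response, so treat it as empty.
        if tag not in (entry.get("ServiceTags") or []):
            continue
        # Fall back to the node address when ServiceAddress is empty.
        host = entry.get("ServiceAddress") or entry["Address"]
        addresses.add(f"{host}:{entry['ServicePort']}")
    return sorted(addresses)


if __name__ == "__main__":
    # Hypothetical catalog entries for containers registered by Registrator.
    sample = [
        {"Address": "192.168.99.100", "ServiceAddress": "",
         "ServicePort": 32768, "ServiceTags": ["production"]},
        {"Address": "192.168.99.100", "ServiceAddress": "192.168.99.100",
         "ServicePort": 32769, "ServiceTags": ["staging"]},
    ]
    print(production_addresses(sample))
```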

Finally, we configure Consul watches in a JSON file included in consul-api-demo, so that every time the list of registered services is updated, an external handler (script.sh) is invoked. This bash script gets the list of all current NGINX Plus upstream servers, uses the Consul services API to loop through all the containers registered with Consul that are tagged production, and uses NGINX Plus’ dynamic reconfiguration API to add them to the NGINX Plus upstream group if they’re not listed already. It then also removes from the NGINX Plus upstream group any production‑tagged containers that are not registered with Consul. [Editor – The script is the part of the solution that makes the most use of the deprecated NGINX Plus Status and Upstream Conf modules.]
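The heart of the handler is a comparison between two lists. Here is a minimal sketch of that comparison, written in Python rather than bash and making no HTTP calls. It assumes the upstream list has the shape returned by the NGINX Plus API (objects with id and server fields) and that the Consul side has already been reduced to "host:port" strings for production‑tagged services; the function name plan_changes is hypothetical.

```python
from typing import Dict, Iterable, List, Set, Tuple


def plan_changes(upstream_servers: Iterable[Dict],
                 consul_addresses: Iterable[str]) -> Tuple[List[str], List[int]]:
    """Return (addresses to add, server IDs to remove) for one upstream group.

    upstream_servers: objects with "id" and "server" keys describing the
    servers NGINX Plus currently has in the group.
    consul_addresses: "host:port" strings for services tagged production.
    """
    desired: Set[str] = set(consul_addresses)
    # Map each current server address to the ID NGINX Plus assigned it.
    current = {s["server"]: s["id"] for s in upstream_servers}
    # Registered in Consul but missing from NGINX Plus: add.
    to_add = sorted(desired - set(current))
    # In NGINX Plus but no longer registered in Consul: remove by ID.
    to_remove = sorted(sid for addr, sid in current.items() if addr not in desired)
    return to_add, to_remove


if __name__ == "__main__":
    # Hypothetical state: one stale server, one newly registered container.
    current = [{"id": 0, "server": "10.0.0.1:80"}, {"id": 3, "server": "10.0.0.2:80"}]
    print(plan_changes(current, ["10.0.0.2:80", "10.0.0.3:80"]))
```

Computing the full difference on every invocation, instead of reacting to individual events, keeps the handler idempotent: if a watch fires twice or an earlier run failed partway, the next run still converges on the set of services registered in Consul.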

Summary

Using a script like the one provided in our demo automates the process of upstream reconfiguration in NGINX Plus by dynamically reconfiguring your upstream groups based on the services registered with Consul. This automation also frees you from having to figure out how to issue the API calls correctly, and reduces the amount of time between the state change of a service in Consul and its addition or removal within the NGINX Plus upstream group.

Check out our other blogs about service discovery for NGINX Plus:

- Service Discovery for NGINX Plus Using DNS SRV Records from Consul

- Service Discovery for NGINX Plus with etcd

- Service Discovery for NGINX Plus with ZooKeeper

And try out automated reconfiguration of NGINX Plus upstream groups using Consul for yourself:

- Download consul-api-demo from our GitHub repository

- Start a free 30‑day trial of NGINX Plus today or contact us to discuss your use cases